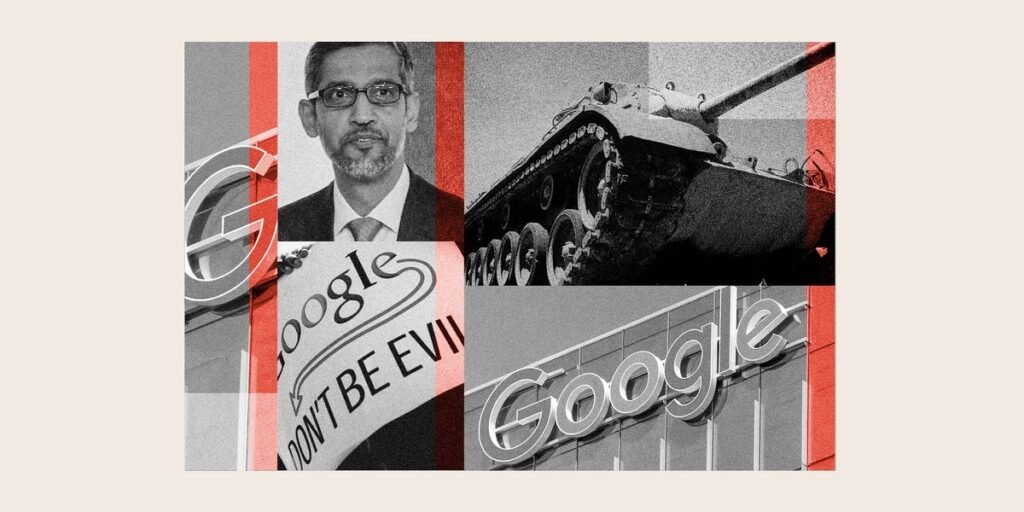

On April 27, more than 600 Google employees signed a letter urging CEO Sundar Pichai to prevent the Pentagon from using the company’s AI products for classified operations.

Google had spent the previous months building a closer relationship with the US Defense Department after a few frosty years. In 2018, more than 4,000 Googlers sent a letter imploring Pichai to cancel Project Maven, a contract that used Google’s AI to analyze drone footage. Google chose not to renew the contract and drew up a set of company-wide principles that included a pledge not to use its AI for military or surveillance purposes.

The language in the two employee letters, sent eight years apart, was strikingly similar. Both six-paragraph letters warned of “irreparable damage” to Google’s reputation. Both argued that Google would ultimately not be able to control how the Pentagon uses the technology. “We believe that Google should not be in the business of war,” the 2018 letter began. “We want to see AI benefit humanity; not to see it being used in inhumane or extremely harmful ways,” employees wrote in the letter this past week.

This time, Googlers received a different response, one that marked just how much has changed at the company and across Silicon Valley in the past few years. Google had gone ahead and signed the deal.

Defense is no longer a boogeyman in the tech industry. As the second Trump administration raises defense spending to modernize warfare, tech companies vying for artificial intelligence dominance are clamoring for lucrative government contracts that may define AI’s winners and losers. Last year, Google removed its pledge to not use AI for weapons; the company has since ramped up contracts for its AI and cloud products with the Defense and Homeland Security departments and with several governments of US allies. “This is an area we’re going to be leaning more into. We’re talking with governments about their national security concerns,” Tom Lue, Google DeepMind’s VP of global affairs, told staff at a town hall in January. On Friday, the Defense Department announced that, in addition to Google, it had completed agreements with six tech companies — Amazon, Microsoft, Nvidia, OpenAI, SpaceX, and startup Reflection AI — to use their AI for classified projects.

“We are proud to be part of a broad consortium of leading AI labs and technology and cloud companies providing AI services and infrastructure in support of national security,” a Google spokesperson said in a statement to Business Insider. “We remain committed to the private and public sector consensus that AI should not be used for domestic mass surveillance or autonomous weaponry without appropriate human oversight.”

As Google aims to keep pace in the military race, employees say it’s grown more militant toward dissent.

Internally, the company has clamped down on political discussion by banning certain topics and words, including “ICE” and “genocide,” from its internal message boards. Staff say the freewheeling culture that once defined “Googliness” is long gone as leaders have attempted to quell employee activism.

While many employees approve of the company’s embrace of national security work (just 600 of the company’s nearly 195,000 employees signed the most recent letter), some Googlers say they feel both more vigilant and less empowered to question leadership.

“It’s a shame that one of the biggest and brightest tech companies avoided having an honest discussion about this,” Andreas Kirsch, a senior researcher at Google DeepMind, tells Business Insider of the company’s decision to sign the Pentagon contract this week. He also posted to X: “I personally feel incredibly ashamed right now to be Senior Research Scientist at Google DeepMind.”

“If we want to be an ethical company, transparency is a huge part of that,” says Varden Wang, an AI engineer at Google. “I would like to see stronger defining principles. For leaders to say: This is what the company values and believes in.”

In 2018, Google was perhaps the last Silicon Valley company that still carried the weight of its own mythology. To work at Google was to embody the ideals of “Googliness.” Employees were encouraged to speak up when they were unhappy. Staff would discuss sometimes prickly political topics on email lists. And there was the most sacred mantra: Don’t be evil.

“No other company was as high on its own ability to solve the world’s problems,” says Claire Stapleton, who worked at Google from 2007 to 2019 and was a key organizer of the 2018 employee walkouts over the company’s handling of sexual harassment cases.

Pichai and Google cofounder Sergey Brin criticized policies of the first Trump administration. Brin, who called Trump’s 2016 election “deeply offensive,” was seen protesting Trump’s travel ban at San Francisco International Airport in 2017. (In Trump’s second term, both men have attended White House dinners, at one of which Trump talked about Brin’s “MAGA girlfriend.”)

The end of the last decade was a demarcation point at Google. The workforce was riled by a series of internal clashes: over Project Maven, over a search engine that Google was secretly building for China, and over accusations that a senior executive’s sexual misconduct had been swept under the rug. In 2018, around 20,000 Googlers walked out following a New York Times report that Google had given Android leader Andy Rubin a $90 million exit package after finding sexual misconduct claims against him to be credible (Rubin denied the allegations). Leadership encouraged the protest. “Sundar sent an email before the walkout saying if you want to participate in this, go for it,” says Stapleton.

Soon after, Google’s culture of openness began to close. In 2019, the same year founders Brin and Larry Page stepped away from the company, Google banned political discussions on internal forums and mailing lists; such posts are now flagged by what is known as the Internal Community Management Team (ICMT). Today, topics such as ICE are off limits, and two employees said that describing the Gaza conflict as a “genocide” is forbidden.


“All throughout last year, we had this tension with the moderation team because we’re not allowed to use the word genocide because it’s distressing and political, which makes it a little difficult to talk about the implications of your software,” says Matthew Tschiegg, a software engineer at Google since 2014.

In an email circulated among an employee resource group in January, employees said their ability to speak openly about hot-button issues was being “increasingly undermined” by Google’s internal moderation policies.

“I think we are at a critical point in Google’s history,” read the email. “Leadership is testing increasingly stringent policing of speech. By not speaking about it we are giving in to a culture of fear which makes it even more difficult for Googlers to ‘do the right thing’ and ‘challenge the status quo’.”

Company all-hands events, where employees once would gather over IPAs, discuss the week’s achievements, and throw hardballs at leaders, are now more sanitized and filled with corporate speak, employees say. Questions submitted by staff at some of the larger town halls, such as the company’s once-weekly, now-monthly “TGIF,” are now often summarized using an AI tool, which has a habit of sanding down the edges of more confrontational submissions. One employee said a question was recently submitted about Google’s work with ICE, Customs and Border Protection, and the Department of War; at the town hall, it had been reframed to ask about Google’s work with government agencies in general. A Google spokesperson said the tool can produce AI summaries when many similar questions are submitted, which allows leaders to address more topics. The spokesperson added that Googlers have been asking more questions since the feature was introduced.

An internal document for the AI tool, which was code-named “Project Saturday” and is now called “Ask,” states that moderators are also able to reword the text of the questions. Several employees pointed to this as an example of how Google has curbed open speech internally.

“There’s been a huge shift where people are relying on external reporting to understand how their work is used,” said a Google DeepMind employee.

Several other Google employees said they, too, increasingly rely on news reporting to learn about internal projects. One particularly volatile flashpoint they cited was Project Nimbus, a $1.2 billion deal that Google and Amazon signed in 2021 to supply the Israeli government with cloud services. After the war in Gaza broke out in 2023, some employees became concerned over how the company might be aiding Israel’s military. The tensions came to a head in 2024 when Google fired 50 employees over a sit-in protest.

Tschiegg says a lot of Google employees got re-engaged with activism because of Project Nimbus. “Workers who had been around a long time and believed in that ‘don’t be evil’ motto perceived a lot of potential for violence and misery to come from that contract,” he says.


Google says these contracts cover administrative workloads and do not involve its AI being used for military or surveillance purposes. Some inside the company worry that Google might not ultimately be able to enforce those red lines. Last year, The Intercept reported that internal documents showed Google executives acknowledging they would be unable to fully monitor or control how the Israeli government used its technology. Earlier this year, The Washington Post reported on a whistleblower who claimed that Google had assisted the Israel Defense Forces in improving its AI’s reliability in identifying objects such as drones and soldiers.

“There are so many unanswered questions,” says Alex, a software engineer at Google who asked to be identified only by his first name. He said there had still not been any transparency about Google’s work with the Israeli government. “Project Nimbus was signed in 2021 and we only learned about military applications from news sources.”

Google’s willingness to engage with classified Pentagon work caught some employees in its AI division by surprise. Leaders at DeepMind, the AI lab Google acquired in 2014, were once so worried about such a scenario that they tried, and failed, to create a firewall that would prevent Google from using its technology for surveillance or autonomous weapons.

In February, amid a standoff between Anthropic and the Pentagon, more than 100 Google employees working on AI, including some in DeepMind, sent a letter to Jeff Dean, Google’s chief scientist, opposing the use of Gemini for the very applications DeepMind had once feared.

Following news this week that Google had signed a deal with the Pentagon for classified operations, some employees across the company proposed a strike but held off for fear of retaliation, according to a person familiar with the discussions.

Those fears are compounded by layoffs, which are now the norm across the tech industry. Google cut 12,000 employees in 2023 and has since made numerous cuts across the company. “There’s less worker security, the external job landscape is different,” says one Google DeepMind employee. Another tells me, “Employee leverage is just a little less clear.”

Tschiegg said there has been an effort among organizing employees to communicate with their peers at Amazon, Microsoft, and other tech companies. “We’re trying to put our heads together on how to meet this moment. Because frankly, there’s a real and impending sense of doom for folks working on these AI tools,” he said.

Others are taking a more pragmatic view about tech’s relationship with the Pentagon. “In today’s world I don’t see how an American company can avoid working with the US DOD, independent of what some of its employees feel,” says Caesar Sengupta, a vice president at Google from 2009 to 2021. “Whether it is good or bad is a second order point. At the end of the day companies are subject to the pressure of the country they are domiciled in.”

On Wednesday, two days after employees made their unsuccessful plea to leadership, Google reported its first-quarter financials. Pichai said AI was “lighting up every part of the business.” Anat Ashkenazi, the company’s chief financial officer, reeled off the numbers from another blockbuster quarter, in which profit jumped 81%. The Pentagon contract went unmentioned.

Ashkenazi signed off her prepared statement: “I want to take this opportunity to thank our employees for their contribution to our performance.”

Hugh Langley is a senior correspondent at Business Insider where he writes about Google, tech, and wealth.

Business Insider’s Discourse stories provide perspectives on the day’s most pressing issues, informed by analysis, reporting, and expertise.